
Robots txt no index
9/9/2023

To make the interaction between robots.txt and meta robots tags a bit clearer to the general public, I decided to write this guide, which shows exactly what happens when the two conflict.

Robots.txt noindex vs meta noindex: which one has priority?

Case 1: Robots.txt forbids a URL (Disallow) but the meta tag allows indexing.
Outcome: The page is blocked by robots.txt. Googlebot never sees the meta tag, so it is ignored. The page will appear in the results only as a bare reference, and only on very rare occasions (it will have no description, only a title and URL).

Case 2: Robots.txt allows a URL but the meta tag forbids indexing.
Outcome: The page will not be indexed and will not be shown in the search results at all.

Case 3: Both robots.txt and the meta tag forbid indexing of a URL.
Outcome: The page is blocked by robots.txt. Googlebot never sees the meta tag, so it is ignored, which means the page can still appear in the search results on rare occasions as a snippet with no description.

Meta noindex vs meta index conflict: If a single page has both meta noindex and meta index tags, meta noindex wins. The page will not be shown in the search results.

Now that you understand the cases above, let's move on to something even more advanced.

Advanced stuff: passing PageRank in robots.txt and meta conflicts

When is the link juice passed, and when is it not? As you may or may not know, even pages that are kept out of the index by any means can still accumulate PageRank. If you don't know how to handle this properly, you might be wasting a large amount of link juice that could have flowed to pages you actually want to receive it. If you forbid a URL with robots.txt, Google cannot see the content of the page, so none of the links on that URL will ever be seen and they cannot pass their link juice.
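The three cases and the noindex-vs-index rule can be condensed into a small decision function. This is only a sketch of the outcomes described in this guide, not of how Google is implemented. The names `Outcome` and `resolve` are made up for illustration, and the `links_visible` field rests on an assumption: links on a page can be discovered whenever the page is crawled (the guide itself only states the blocked case).

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Outcome:
    crawled: bool        # can Googlebot fetch the page and read its meta tags?
    may_appear: bool     # can the URL show up in the search results at all?
    links_visible: bool  # can links on the page be seen (and pass link juice)?
    note: str


def resolve(robots_allows: bool, meta_directives: Iterable[str]) -> Outcome:
    """Summarize the guide's cases for one URL (hypothetical helper)."""
    directives = {d.strip().lower() for d in meta_directives}

    if not robots_allows:
        # Cases 1 and 3: the page is never fetched, so the meta tag is never
        # read. The bare URL can still surface on rare occasions.
        return Outcome(False, True, False,
                       "blocked by robots.txt; meta tag ignored; "
                       "may rarely appear without a description")

    if "noindex" in directives:
        # Case 2, and the noindex-vs-index conflict: noindex wins.
        # Assumption: the page is crawled, so its links are visible.
        return Outcome(True, False, True, "crawled but kept out of the index")

    return Outcome(True, True, True, "crawled and indexable")


if __name__ == "__main__":
    print(resolve(False, ["index"]).note)             # Case 1
    print(resolve(True, ["noindex"]).note)            # Case 2
    print(resolve(False, ["noindex"]).note)           # Case 3
    print(resolve(True, ["index", "noindex"]).note)   # tag conflict
```

Note how Case 1 and Case 3 return the same outcome: once robots.txt blocks the URL, the content of the meta tag no longer matters.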

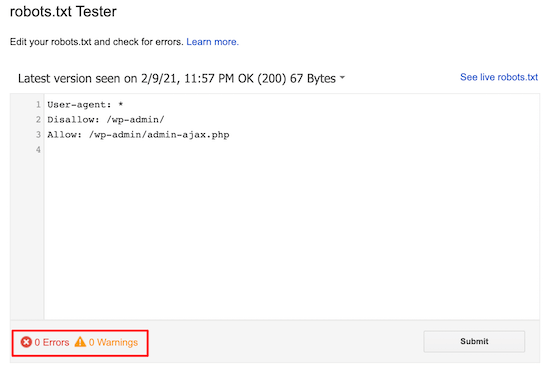

Have you ever wondered which directive wins if your robots.txt and your meta tags give conflicting instructions about content indexing? Don't worry, this question can be a real challenge even for very advanced SEOs.

If you have a website built from bare HTML + CSS, that is, you write each page by hand and don't use scripts or databases (a 100-page website is 100 HTML files on your hosting), then just skip this article. There is no need to manage indexing on such websites.

But if your site is more than a simple business card website with a couple of pages (although even such websites have long been built on CMSs like WordPress, MODx and others), and you work with any CMS (which means programming languages, scripts, a database, etc.), then you will come across a number of "trappings".

The main problem is that the search engine index picks up things that shouldn't be there: pages that bring no benefit to people and simply clutter the search results.

There is also such a thing as a crawl budget, which is the number of pages the robot can scan in a given period. It is determined for each site individually. With a lot of unblocked garbage, pages can take longer to get indexed because there isn't enough crawl budget left for them.

    Disallow: /wp-content/uploads/ # Close the folder
    Allow: /wp-content/uploads/*/*/ # Open picture folders of the form /uploads/close/open/
    Disallow: /wp-login.php # Close the file. You don't need to do this
    Disallow: /wp-admin # Close all service folders in the CMS
    Disallow: */trackback # Close URLs containing /trackback
    Disallow: */feed # Close URLs containing /feed
    Disallow: */comments # Close URLs containing /comments
    Disallow: /?s= # Close the website search URL
    Allow: /wp-content/themes/RomanusNew/js* # Open only js files
    Allow: /wp-content/themes/RomanusNew/style.css # Open the style.css file
    Allow: /wp-content/themes/RomanusNew/css* # Open only the css folder
    Allow: /wp-content/themes/RomanusNew/fonts* # Open only the fonts folder
    Host: romanus.
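To see how Allow and Disallow rules like the ones above interact, here is a rough sketch of a path matcher. It assumes the matching behavior described in RFC 9309 and used by Google: `*` matches any run of characters, a trailing `$` anchors the end of the path, the longest matching pattern wins, and Allow wins a tie. The helper names `_to_regex` and `is_allowed` are invented for this example, and the rule list is a subset of the file above.

```python
import re

# A subset of the rules above, as (directive, pattern) pairs.
RULES = [
    ("Disallow", "/wp-content/uploads/"),
    ("Allow", "/wp-content/uploads/*/*/"),
    ("Disallow", "/wp-login.php"),
    ("Disallow", "/wp-admin"),
    ("Disallow", "*/feed"),
    ("Disallow", "/?s="),
]


def _to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything, then turn the escaped '*' back into a wildcard.
    body = re.escape(pattern).replace(r"\*", ".*")
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules, path: str) -> bool:
    """Return True if `path` may be crawled under `rules`."""
    best_len, best_allow = -1, True  # no matching rule means allowed
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty pattern matches nothing
        if _to_regex(pattern).match(path):  # match() anchors at the start
            allow = directive.lower() == "allow"
            longer = len(pattern) > best_len
            tie_for_allow = len(pattern) == best_len and allow
            if longer or tie_for_allow:
                best_len, best_allow = len(pattern), allow
    return best_allow


if __name__ == "__main__":
    for p in ["/wp-content/uploads/2023/09/pic.png",
              "/wp-content/uploads/file.zip",
              "/wp-admin/options.php",
              "/blog/feed",
              "/about/"]:
        print(p, "->", "allowed" if is_allowed(RULES, p) else "blocked")
```

The first path is allowed because the Allow pattern is longer than the Disallow pattern that also matches it. This is how the uploads folder can be closed while its dated picture subfolders stay open.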
